Thursday, August 17, 2023

Upgrading from IBM Installation Manager 1.8.x to 1.9.x

Thursday, July 13, 2023

Securing the Netcool EWS Probe

Background

Solution

The steps are taken from this link https://docs.microsoft.com/en-us/graph/auth-limit-mailbox-access.

Because of security concerns raised by a customer, a documentation APAR has been opened to add the following steps to the probe guide.

APAR number : IJ41418

Limiting probe access to specific Exchange Online mailboxes.

By default, OAuth authentication enables the probe to access all mailboxes in an organization on Exchange Online. Administrators can identify the set of mailboxes that the probe is permitted to access by putting them in a mail-enabled security group. Administrators can then limit probe access to only that set of mailboxes by creating an application access policy scoped to that group.

a. Create a new mail-enabled security group using steps in this link or use an existing one and identify the email address for the group.

b. Add the user of each mailbox that the probe needs to access to the group.

c. Connect to Exchange Online PowerShell. For details, see Connect to Exchange Online PowerShell.

d. Create an access policy on the registered Azure Active Directory application.

New-ApplicationAccessPolicy -AppId <<Application/ClientID>> -PolicyScopeGroupId <<SecGroupEmail>> -AccessRight RestrictAccess -Description "IBM Netcool EWS Probe Mailbox"

Tuesday, April 18, 2023

Configuring the Prometheus JSON Exporter to Parse a JSON Array

Background

The Prometheus JSON Exporter allows you to parse arbitrary JSON data into Prometheus metrics. You'll even find some examples at the link. The problem is that all of the examples show a single JSON object. What is the syntax supposed to be if you're dealing with JSON that is an array, like this data? This question came up on Reddit.

Solution

The solution is to specify the path as:

path: '{[*]}'

That's it. That will return the entire array as a list, which is what's needed to have the JSON Exporter loop through it.
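To show where that path fits, here is a minimal sketch that writes a small JSON Exporter config file. Only the path value comes from this post. The metric name, the id label, and the value field are hypothetical placeholders for whatever your array elements actually contain, and the modules/metrics layout is an assumption based on recent json_exporter example configs, so older releases may expect a different structure.

```shell
# Write a hypothetical JSON Exporter config for a payload whose top level is an array.
# Everything except the path line is a placeholder to adapt to your own data.
config_file="${TMPDIR:-/tmp}/json_exporter_array.yml"

cat > "$config_file" <<'EOF'
modules:
  default:
    metrics:
      - name: example_item
        type: object
        help: One series per element of the top-level JSON array
        path: '{[*]}'
        labels:
          id: '{.id}'          # assumes each element has an "id" field
        values:
          value: '{.value}'    # assumes each element has a numeric "value" field
EOF

echo "Wrote $config_file"
```

The quoted heredoc delimiter ('EOF') keeps the shell from touching the braces and asterisk in the path.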

Here's a link to the GitHub gist with more details about how you can use the above information.

Monday, April 10, 2023

I bet you don't fully understand the power of a CI/CD pipeline

If your team is delivering something digital, you MUST use a CI/CD pipeline.

I'm sure you've heard of CI/CD (Continuous Integration/Continuous Delivery) pipelines, but I bet a lot of you don't truly understand how powerful they can be. Before the other day, I basically understood their power, but then I submitted my first Pull Request to a huge open source project and was simply blown away. A Pull Request (I hate the name because the words don't make sense to me, but that's the name) is a mechanism for a developer/contributor to notify team members that they have completed a feature or change. So it's really a request to merge a change into the code base.

In my case, I was reading the Grafana Agent documentation and saw an error that bugged me. There was an incorrect statement in the technical description: the wrong label was specified. I've run across this type of error in numerous vendor documents, so I'm used to it, but it still gets me every time I come across one. The difference here was that the error was close to the bottom of the page, and at the bottom was this group of links:

So I clicked on "Suggest an edit" and was taken to the GitHub repository storing the docs. I already had an account, so I made the small change I needed, and it automatically created a new branch for me with the change and prompted me to make a Pull Request. So I did that, and it let me know that the first issue was that I needed to sign the Contributor License Agreement, and it provided a link to that. I signed the agreement, and the pull request automatically got put into a "Needs Review" state and was assigned to one of the maintainers. So I figured "Well, I did something good. Maybe that update will show up on the website one day, eventually". A couple of hours later I got an email stating that my pull request was reviewed, approved, and merged into the main trunk. So I figured I would check the Grafana Agent page for grins, AND MY CHANGE WAS THERE, LIVE ON THE SITE!

Now for my "bigger picture" opinion on this:

In working with large software vendors, I have made similar change requests that took me hours to complete and that were NEVER implemented in the product documentation, so I was completely amazed. After going through this process, it is my strong opinion that any company that provides documentation for their products should have a publicly available repository that allows public contributions. I realize that the legalese for any particular Contributor License Agreement would need to be ironed out, along with many other details. Or there could be a restriction that updates are allowed only by Business Partners (who have already signed numerous documents). My point is that a huge number of extremely useful updates could be crowdsourced in this way.

Thursday, March 30, 2023

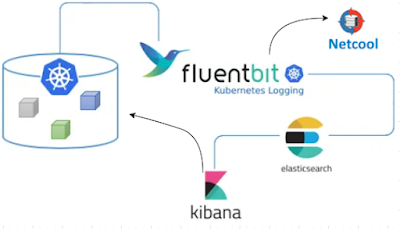

Sending Kibana (free/open source) Alerts via Webhook Using Fluent-Bit (free)

Background

My Solution

Wednesday, March 29, 2023

Tunneling X11 over SSH as a different user

Background

Solution

Ensure X11 tunneling is configured for your session:

Open the session (connect to the remote system) and ensure that your xauth entry exists and your local display is set so you can get your MIT-MAGIC-COOKIE:

[franktate@linux1 ~]$ echo $DISPLAY

localhost:10.0

[franktate@linux1 ~]$ xauth list | grep :10

linux1.gulfsoft.com/unix:10 MIT-MAGIC-COOKIE-1 a229706ccb496af61501ea25a95488

[franktate@linux1 ~]$

Note how your display number (the 10 in localhost:10.0) is used to identify the appropriate MIT-MAGIC-COOKIE entry.

Ensure that an X application can connect to your Windows X server by running xterm or some other application.

Switch users and set the MIT-MAGIC-COOKIE:

[franktate@linux1 ~]$ su - db2inst1

Password:

-bash: TMOUT: readonly variable

[db2inst1@linux1 ~]$ xauth add linux1.gulfsoft.com/unix:10 MIT-MAGIC-COOKIE-1 a229706ccb496af61501ea25a95488

[db2inst1@linux1 ~]$
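The copy-and-paste of the cookie can be scripted. The following is a minimal sketch, not part of the original procedure: display_number and xauth_add_command are hypothetical helper names, and the second helper only reads `xauth list` output on standard input, so it can be tried without an X session. It matches the display number exactly, which avoids `grep :10` also matching a display such as :100.

```shell
# Print the display number from a DISPLAY value such as "localhost:10.0".
display_number() {
  d="${1#*:}"                # drop everything through the first colon -> "10.0"
  printf '%s\n' "${d%%.*}"   # drop the screen suffix -> "10"
}

# Read `xauth list` output on stdin and print the matching `xauth add` command
# for the display number given as $1 (first matching entry only).
xauth_add_command() {
  awk -v n="$1" '$1 ~ (":" n "$") { print "xauth add", $1, $2, $3; exit }'
}

# Typical use as the original user, before running su:
#   xauth list | xauth_add_command "$(display_number "$DISPLAY")"
# Then paste the printed command into the shell of the target user.
```

Printing the command instead of running it keeps the cookie hand-off explicit, since the target user's shell is a separate session.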

Run xterm or some other X application to be sure X is tunneled correctly. Assuming that works, now connect from the first machine to another.

SSH to the next hop host and get your MIT-MAGIC-COOKIE

[db2inst1@linux1 ~]$ ssh -Y frank2@linux2

frank2@linux2's password:

Last failed login: Sat Feb 23 16:17:29 EST 2019 on pts/0

[frank2@linux2 ~]$ echo $DISPLAY

localhost:10.0

[frank2@linux2 ~]$ xauth list | grep :10

linux2.gulfsoft.com/unix:10 MIT-MAGIC-COOKIE-1 2d31b43034bfc9da1c0d2848c1b718

[frank2@linux2 ~]$

Run xterm or some other X application to be sure X is tunneled correctly.

Switch users and set the MIT-MAGIC-COOKIE

[frank2@linux2 ~]$ su - db2inst1

Password:

[db2inst1@linux2 ~]$ xauth add linux2.gulfsoft.com/unix:10 MIT-MAGIC-COOKIE-1 2d31b43034bfc9da1c0d2848c1b718

Run an X application like xterm to validate that it's working.

Modify kibana.yml after deploying Kibana with Helm

If you open a shell in the Kibana pod with:

kubectl exec --stdin --tty kibana_podname -- /bin/bash

you'll find that there's no editor available (like vi or even ed). You can cat config/kibana.yml, but the comments state that it is auto-generated. So what are you supposed to do to add a setting to the file? For example, you might need to add a value for xpack.encryptedSavedObjects.encryptionKey so you can configure alerting.

The solution I came up with is a multi-step process:

1. Get the default values.yaml file for the chart and store that in a file with the command:

helm show values elastic/kibana > /tmp/kibana.yaml

2. Edit that file to add an entry for kibana.yml under kibanaConfig, which is empty (set to {}) by default. The entry holds the settings you want added to kibana.yml, such as xpack.encryptedSavedObjects.encryptionKey.

3. Uninstall the existing release:

helm uninstall kibana

4. Then install the helm chart again with:

helm install kibana elastic/kibana -f /tmp/kibana.yaml

And that's it. Your changes will be applied and you're good to go.
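As a sketch of what the kibanaConfig edit from step 2 might look like, the snippet below writes a small override file instead of editing the full values file. The file name and the generated key are assumptions for illustration. The kibana.yml key under kibanaConfig follows the commented example in the chart's default values, and Kibana expects the encryption key to be at least 32 characters long.

```shell
# Hypothetical override file; passing it with -f layers it over the chart defaults.
values_file="${TMPDIR:-/tmp}/kibana-overrides.yaml"

# 16 random bytes printed as hex gives a 32-character key.
key="$(head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n')"

cat > "$values_file" <<EOF
kibanaConfig:
  kibana.yml: |
    xpack.encryptedSavedObjects.encryptionKey: "$key"
EOF

cat "$values_file"
# Then, as in the install step above:
#   helm install kibana elastic/kibana -f "$values_file"
```

Generate the key once and keep it somewhere safe. If it changes on a later install, Kibana can no longer decrypt the saved objects that were encrypted with the old key.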

I'm pretty sure there's a way to create a configMap and reference it, which would then allow you to just delete the pod to have it re-read the configMap, but I haven't figured out those exact details. Maybe in another post.