Downloads

Get ready for another big download. To download the media for Windows, it was approximately 15GB. I also downloaded the Linux media, but really they do share a bunch between the various OS installs, so this was only another 5GB. The IBM document for downloading is located at http://www-01.ibm.com/support/docview.wss?uid=swg24020532

Installation

For now, I have only done the Windows based install as I was more interested in the actual product usage rather than fighting with an install at this time. Little did I know that the install would be a bit of a fight.

I decided to go with the default install for now just to get it up and running. So I brought up my trusty Windows 2003 SP2 VM image and started to prep it for the installation. Since the disk requirements were more than I allocated to my C drive, I created a new drive and allocated 50GB for an E drive. I then extracted the images to the E drive and started installing. After a few pages of entering information, I started to get failures. After checking around and asking IBM support, it turns out the default install is only supported on one volume (this is in the documentation, but not really clear as I was installing everything on one “volume”, the E drive). So I had to increase the C drive space (thanks to VMware Converter) and start again. After that the install went fine. It took about 5 hours to complete.

Start Up

Starting TPM is not much different than it was in the 5.x versions. There is a nice little icon to start and stop TPM. The only issue I had is that the TDS (Tivoli Directory Server) does not start automatically. This means that you cannot log in! You have to manually start the TDS service. I am sure that this has something to do with startup prereqs, but have not investigated right now.

New User Interface

Because I have used TSRM (Tivoli Service Request Manager), the interface was not that foreign to me, but still way different than the 5.x interface. I find that it is not as fluid as the old interface but is way more powerful and flexible. With a proper layout, I am sure that it can be way easier to navigate than 5.x.

One thing that does bug me is that Internet Explorer is the only supported browser. I have been using Firefox and everything seems to work fine except for the reports (could be just my setup)

The new interface has tabs which are called Start Center Templates. These start Centers can be setup to provide shortcuts into the application that are specific to the function of the user. So if you have someone that is an inventory specialist, you can create a layout that will provide quick access to everything they need to perform their job rather than displaying what they do not need like workflows and security. A user/group can also be assigned multiple Start Center templates. This is useful for using existing templates created for various job functions and providing them to a user whose role spreads multiple areas.

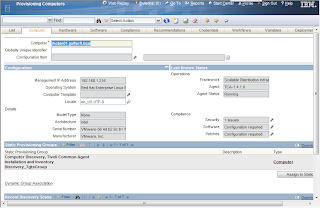

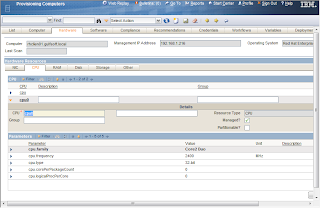

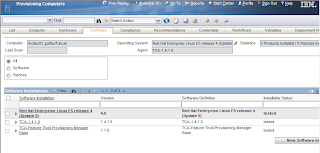

Discovery

There is something new called a Discovery Wizard. These wizards can perform multiple functions for discovery rather than the one step discovery (RXA discovery or inventory). For example, one wizard is called Computer Discovery, Common Agent Installation and Inventory Discovery. This discovery does exactly what it says. The first step will be to discover the computer using the RXA discovery, then once that is completed, install the TCA on the discovered systems, and then do an inventory scan of the systems. Worked pretty nice. The only issue I had is that the default setting for the TCA is to enable the SDI-SAP. This means that in order to perform the inventory scan, the SDI environment needs to be configured first. Either that, or change the global variable TCA.Create.EO.SAP to false.

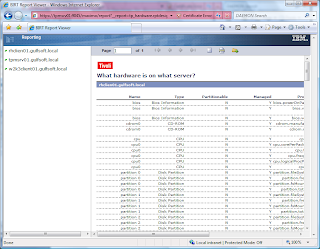

Reporting

Reporting for 7.1 is WAY different than 5.x. TPM now uses BIRT to provide reporting instead of the DB2 Alphablox. Now there is good and bad with this new reporting method. The bad thing is that it is quite a bit more complex than the 5.x reports. The 5.x was pretty simple to use and create a report. The good thing is that BIRT is quite a bit more flexible and is being standardized across the various Tivoli products (Tivoli Common Reporting is BIRT with WebSphere) so the experience from one product can be shared across all.

Another note is that reports are no longer used for the dynamic groups. Dynamic groups use something called a query (I think I will follow-up on this in a future blog).

General Comments

The new version of TPM is looking very promising. The interface is very flexible/customizable, which is something that the old interface was not. The old interface did seem to flow a bit better by allowing the opening of new windows and tabs by right clicking on an object. This is not something that can be done in the new interface. The old one was specifically designed for the job it had to do, but really could not do much more, where the new interface will allow for the ability to expand beyond just TPM functions. This new interface is the same one shared by TSRM, TAMIT, TADDM and many other Maximo products.

Now we just need to see what will happen with the new version of TPMfSW (possibly named TDM or Tivoli Desktop Manager) to see what the full direction will be. One other thing that I know is on the list is the new TCA. This was something that I was hoping would be squeaked in (it was not promised for this release) but hopefully the 7.1.1 release will.

If you have any comments or questions, please fire them off and I will see what I can do to answer them.